GPU stands for Graphics Processing Unit. As the name suggests, it was originally designed for one purpose: rendering graphics. As computers became powerful enough to run video games with realistic visuals, engineers realised that standard processors (CPUs) were not built for the job.

A CPU is highly precise and capable of complex sequential reasoning, but it works on one task at a time. A GPU, by contrast, operates like a large factory floor with thousands of workers performing simple, repetitive tasks in parallel. That architecture proved ideal for graphics workloads, where millions of pixel values must be computed simultaneously, and it is the same foundation behind the NVIDIA H100 and B200 available through Impossible Cloud Network BMaaS.

Matrix multiplication as the computational basis for modern AI workloads

GPUs are ideally suited for training and running AI models because they excel at the high-speed, simultaneous multiplications that modern AI requires. The fundamental operation in AI, Matrix Multiplication, is mathematically the same class of problem as rendering a video game frame.

Modern GPUs contain specialised components called Tensor Cores, engineered specifically for the matrix mathematics involved in AI. Their performance advantage over standard processors in this domain is substantial.

Impossible Cloud Network operates a fleet of NVIDIA H100 and B200 GPUs available as bare metal GPU-as-a-Service (BMaaS). Both systems are purpose-built for the inference and training workloads described in this article.

High Bandwidth Memory Capacity Constraints in Large Model Deployment

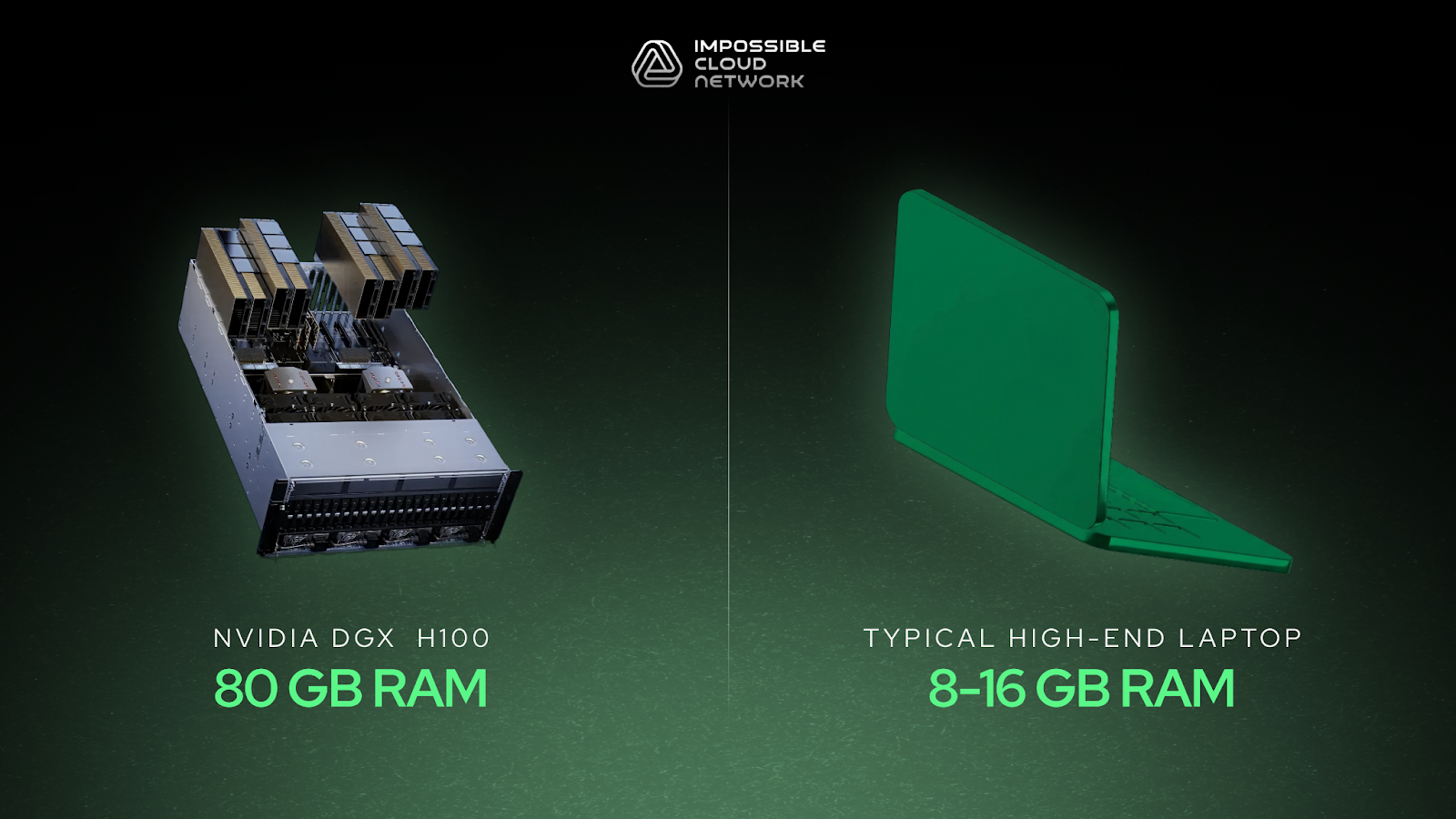

Every GPU includes its own high-speed memory, known as High Bandwidth Memory (HBM). A single high-end AI chip such as the NVIDIA H100 carries 80 gigabytes of HBM. For context, most consumer laptops have between 8 and 16 gigabytes of standard RAM.

This memory is critical because an AI model must reside entirely in GPU memory during operation; every parameter learned during training must be accessible instantly. A modern large language model can require hundreds of gigabytes simply to load, which is why multi-GPU configurations are standard in production environments.

The NVIDIA B200, the current-generation successor to the H100, delivers at least double the memory bandwidth and compute throughput, representing a meaningful step forward for high-demand inference deployments. Both the H100 and B200 are available on the Impossible Cloud Network website.

Interconnected architecture and throughput bottlenecks in multi-node GPU Clusters

A single GPU node, typically eight cards connected together, is already a formidable system. However, modern AI models are large, and simultaneous user demand means even eight cards working together can saturate quickly.

This is where AI data centres come in. A rack is a tall metal enclosure filled with compute trays, each holding eight GPUs. Multiple interconnected racks allow AI providers to serve millions of users concurrently while keeping model weights distributed across all chips in memory.

The speed at which cards communicate with one another proves just as important as the raw compute speed of the cards themselves. GPUs on the same tray communicate at up to 900 gigabytes per second via NVLink. Inter-tray communication, handled by InfiniBand or equivalent interconnects, runs at roughly half that speed, and this gap frequently becomes the primary throughput bottleneck in production deployments. Impossible Cloud Network deploys H100 and B200 BMaaS nodes with the high-speed fabric required to minimise this constraint.

Prefill, decode, and hardware resource constraints in inference workloads

Every prompt sent to an AI model triggers what is called an inference workload. The process unfolds in two stages, and the underlying hardware, such as the NVIDIA H100 and B200 on Impossible Cloud Network BMaaS, determines how efficiently both complete.

In the prefill phase, the model tokenises the input prompt and processes all tokens in parallel to establish context. Each token is mapped to a position within a high-dimensional coordinate space (typically thousands of dimensions) where proximity encodes semantic relationships between concepts.

In the decode phase, the model generates output tokens sequentially. For each token, it traverses all model layers (commonly 80 or more in large-scale models), aggregates attention across the full context window, and predicts the most probable next token. Each generated token is appended to the context window and stored in HBM, incrementally increasing memory consumption.

This sequential nature makes inference computationally intensive. Several factors govern the cost and latency of a given workload:

- Model precision: The industry has largely converged on 8-bit precision for inference. Reducing from 32-bit to 8-bit halves memory requirements twice over, with minimal accuracy degradation for most tasks. Both the H100 and B200 Tensor Cores are optimised for 8-bit computation.

- Context window size: Longer inputs, uploaded documents, and extended chat histories all consume HBM. A system must hold both model weights and the full context for every concurrent user in memory simultaneously.

- Batch size: Inference systems maximise GPU utilisation by batching multiple users together, sharing model weights across the node while processing token predictions in parallel. The limiting factor is typically interconnect bandwidth, not raw compute, which is why NVLink speeds and inter-node fabric quality directly affect tokens-per-second output.

- Inter-node scaling: When a workload exceeds the capacity of a single node, traffic must traverse the inter-node fabric. This secondary bandwidth constraint becomes the ceiling on throughput at scale.

By 2030, inference workloads are projected to account for up to 60% of total global GPU usage. Selecting the right infrastructure, including GPU generation, memory capacity, and interconnect architecture, has a direct impact on cost per million output tokens and end-to-end response latency.

Impossible Cloud Network provides NVIDIA H100 and B200 bare metal GPU access designed for exactly these workloads. Whether deploying a latency-sensitive production inference stack or running high-throughput batch processing, both platforms are available without virtualisation overhead.

If you are evaluating GPU infrastructure for your AI deployment, get in touch to discuss your specific configuration requirements.

.webp)

.webp)

.svg)

.webp)